“If you needed an icon for a supercomputer, you would use the Cray-1,” says Dag Spicer, senior curator at the Computer History Museum, where the computer is spending its retirement. “It blew people’s minds. It was so powerful, so fast.”

Of course, in today’s terms, “it’s roughly equivalent to a first-generation iPhone from Apple,” says Spicer.

Listen to the QUEST radio story The Future of Supercomputers

We don’t need a supercomputer to play Angry Birds today, thanks to something called Moore’s Law.

“Moore’s law is a prediction made by Intel cofounder Gordon Moore in 1965 that the number of transistors – that is the little switches that make up a computer – the number of transistors incorporated in a chip will double approximately every 12 months,” says Spicer. In 1975, Moore amended that timeline to every two years; the often-quoted 18-month figure is a later variation.

What that means is computer chips have gotten smaller and faster at an incredible rate over the last 40 years. Which leads us to a supercomputer known as Hopper.
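To get a feel for what repeated doubling does over 40 years, here is a minimal Python sketch. The function name `growth_factor` is made up for illustration, and the three doubling periods (12, 18 and 24 months) are simply the figures commonly quoted for Moore's Law, not measurements of any real chip.

```python
def growth_factor(years: float, doubling_months: float) -> float:
    """Factor by which a count grows if it doubles every `doubling_months` months."""
    doublings = years * 12 / doubling_months
    return 2 ** doublings


# Compare the commonly quoted doubling periods over a 40-year span.
for months in (12, 18, 24):
    factor = growth_factor(40, months)
    print(f"Doubling every {months} months for 40 years: about {factor:.2e} times")
```

Even the slowest of these, a doubling every two years, works out to 20 doublings, or roughly a millionfold increase.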

Today's Supercomputers

“This is our new Cray XE6 supercomputing system,” says John Shalf, a computer scientist at Lawrence Berkeley National Lab. We’re standing next to row after row of tall black computer towers inside a building in downtown Oakland. The sound of the computer’s massive cooling system is deafening.

“You have to keep it cold or it’ll melt. We’ll have a puddle of chips on the bottom of the floor,” says Shalf.

Hopper is the eighth largest supercomputer in the world. And right now, it’s chewing on some complicated problems. “Number one here is particle accelerator design. We have fusion energy and then we also have laser plasma inertial fusion simulation,” says Shalf.

“Science has just really been revolutionized by the speed of computers,” says Kathy Yelick, associate director for computing sciences at Berkeley Lab. She says scientists use Hopper to simulate everything from black holes to the Earth’s climate. There’s a special term to measure this supercomputer’s power: a petaflop, or a quadrillion calculations per second.

“So how fast is that?” says Yelick. “Most people can do probably about one arithmetic operation per second if they’re pretty good.”

Now imagine asking a billion people on the planet to do one math problem per second. To get to Hopper’s speed, “we would need a million earths,” she says.

A million earths, each with a billion mathematicians – that’s how fast Hopper is. But it won’t be long before a faster model comes along. “Every four years we get a system that’s about 10 times larger than one we put in three or four years earlier,” says Yelick.
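The million-earths comparison can be checked with a few lines of Python. This is only a sketch of the arithmetic: the constant names are invented here, and it treats one "math problem" as one operation and Hopper's speed as a round one petaflop (10^15 operations per second).

```python
OPS_PER_PERSON_PER_SECOND = 1    # Yelick's estimate for a person doing arithmetic
PEOPLE_PER_EARTH = 10**9         # a billion people per planet
EARTHS = 10**6                   # a million earths
PETAFLOP = 10**15                # operations per second in one petaflop

combined_ops_per_second = OPS_PER_PERSON_PER_SECOND * PEOPLE_PER_EARTH * EARTHS
print(combined_ops_per_second == PETAFLOP)  # True

# How long would one person need to match a single second of petaflop computing?
SECONDS_PER_YEAR = 365 * 24 * 3600
years_for_one_person = PETAFLOP / SECONDS_PER_YEAR
print(f"One person would need about {years_for_one_person:,.0f} years")
```

Put another way, a single person working at that pace would need about 31.7 million years to do what a one-petaflop machine does in one second.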

According to Moore’s Law, those next-generation supercomputers should be faster and more compact. But John Shalf says computer chips have hit a wall.

The End of Moore's Law?

“The problem is now we can’t make them go any faster. So we can cram more things on the chip, but if you make them go fast, it’s so hot they’ll melt.”

If chips themselves aren’t faster, supercomputers will simply have to add more and more of them to increase computing power. And that comes with a very big impact on energy use.

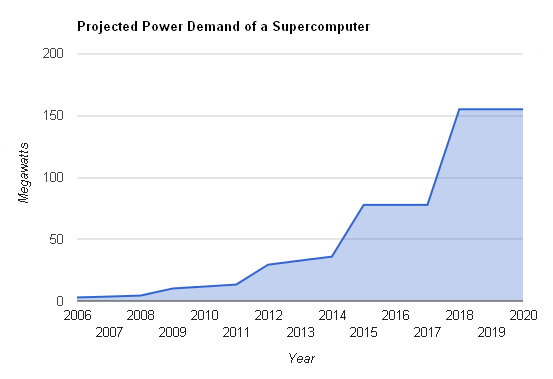

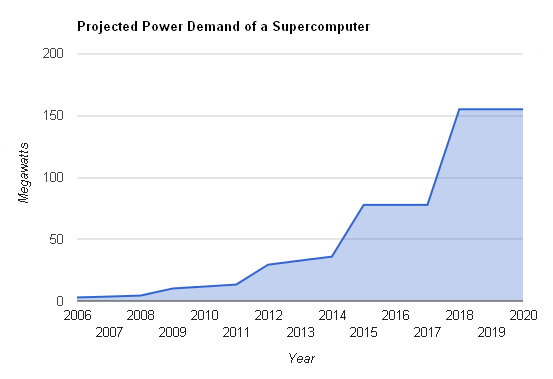

Hopper uses around 3 megawatts of electricity – about as much as 2,000 homes. But future supercomputers? “Projections say that at the end of the decade, we’d be at 100 megawatts if we continue,” says Shalf.

That’s enough power for a small city, about the size of Novato. The electricity bill alone would be roughly 100 million dollars a year.
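The power figures above can be sanity-checked with some back-of-the-envelope Python. This sketch assumes the machine draws its full power around the clock all year; the function names are hypothetical, and the electricity price it prints is only what the article's numbers imply, not a quoted utility rate.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_kwh(power_mw: float) -> float:
    """Energy used in a year by a machine drawing `power_mw` megawatts nonstop."""
    return power_mw * 1000 * HOURS_PER_YEAR


def implied_price_per_kwh(annual_bill_usd: float, power_mw: float) -> float:
    """Electricity price implied by a yearly bill and a constant power draw."""
    return annual_bill_usd / annual_energy_kwh(power_mw)


# Projected 100-megawatt system with a bill of roughly $100 million a year.
projected_kwh = annual_energy_kwh(100)
price = implied_price_per_kwh(100e6, 100)
print(f"{projected_kwh:,.0f} kWh per year, about ${price:.3f} per kWh")

# Hopper: 3 megawatts compared with 2,000 homes.
kw_per_home = 3 * 1000 / 2000
print(f"Average draw per home: {kw_per_home} kW")
```

Under those assumptions, a 100-megawatt machine would use 876 million kilowatt-hours a year, so a $100 million bill implies a price of a little over 11 cents per kilowatt-hour. The Hopper comparison works out to an average draw of 1.5 kilowatts per home.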

“What that says is our current approach to doing supercomputing is a dead end. And that we need to think of dramatically new ways to improve the efficiency of computing,” Shalf says.

That could be done with some very familiar technology. Cell phones have computer chips inside them, but not the same chips as desktop computers.

From Peter M. Kogge, "ExaScale Computing Study: Technology Challenges in Achieving Exascale Systems," Sept. 28, 2008

“For as long as they’ve existed, they’ve wanted a cell phone that would last longer, be less expensive,” says Shalf.

To do that, chips in cell phones have had to be smaller and more energy efficient. So Shalf says, why not build a supercomputer with chips that combine millions of these simple cell phone processors, specially designed for scientific jobs? In other words, use cell phone technology to make the world’s most powerful computers.

“We’re able to demonstrate an additional 80 times more energy efficiency than business as usual, and that gets us within striking distance of where we need to be to build a practical supercomputer,” he says.

Instead of a 100-megawatt supercomputer, it would be a three- to ten-megawatt machine. Whether or not it gets built depends on chipmakers like AMD and Intel, who would design the chips. But Shalf says a supercomputer with that power could make a big difference in climate change science.

“It enables policymakers to have the tools they need to make important decisions that have trillion dollar consequences. And that’s why you want to build a supercomputer that’s able to do this.”

Berkeley Lab hopes to use the supercomputer to better predict some of the trickier impacts of climate change – like changes in rainfall patterns, ice sheet melt and the effects of clouds.

John Shalf of Lawrence Berkeley National Lab stands inside the Hopper supercomputer.
