By Lillian Mongeau

To get a better understanding of how well students can solve complex problems and apply science to real-life scenarios, the National Assessment of Educational Progress recently used hands-on experiments to test 4th-, 8th-, and 12th-grade students, and found that this kind of assessment gives a much more accurate reflection of student comprehension.

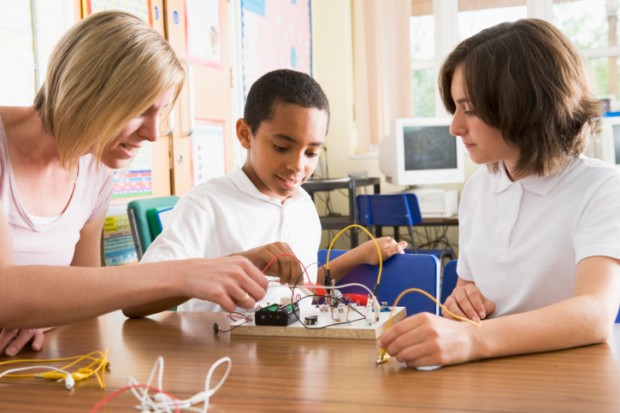

A 2009 round of testing, called The Nation's Report Card Science in Action: Hands-On and Interactive Computer Task, examined 6,000 students from across the country, 2,000 at each grade level. Students performed tasks like testing water samples (12th grade) and assembling electric circuits (4th grade). They also participated in interactive computer tasks that simulated longer-term experiments, like observing plant growth. In both scenarios, students were evaluated on their ability to perform the tasks, observe the results, and draw conclusions.

“The bottom line is, we learned so much more that we couldn’t have learned from those paper and pencil tests,” said Jack Buckley, commissioner of the National Center for Education Statistics, which creates the annual “Nation’s Report Card” based on the results of tests like this one, administered by the National Assessment of Educational Progress (NAEP).

But what they learned was a mixed bag.

A majority of students across grade levels (76 percent) were able to perform the simpler experiments correctly and accurately observe the results. However, when experiments involved more complicated data sets, students’ ability to execute and observe fell sharply: only 36 percent of students tested across grade levels were able to complete the tasks under these conditions.