The intersection of women and games has been getting a ton of column inches lately: sexist working environments, problematic armor on female characters, the success of Nintendo’s new majority-female dev team, and even the dearth of female enemies in games. We have seen tons of new voices joining in to talk turkey on gender in games. But I noticed something: lots of women, especially when giving talks at conferences, whip out images of themselves playing video games as kids. It might be a faded picture of a little girl, with bed head and one sock, propped up on a mountain of pillows, hammering Sonic the Hedgehog moves into a controller far too big for her hands. Or a pair of sisters, each wearing one of dad’s shirts hanging to their knees, fighting over turns at the keyboard. The pictures are cute and heartwarming and, frankly, not helping.

I love people who discover at age 7 an all-consuming passion for video games, work hard, and grow up to become world-class game developers. There’s nothing wrong with a childhood shaped by gaming; it just isn’t the only option. We all get nostalgic for games. Personally, I have a touchy-feely longing for my first time with Oblivion. It was my first foray into an Elder Scrolls game and it blew me away. You mean I get to be an elf and sneak around with a giant bow shooting the heads of goodies and baddies from the shadows? Wait, and there’s a library’s worth of books to read in-game, packed with lore and backstory? Sign me up! It wasn’t the first video game I’d ever played; I’d fiddled with friends’ Nintendos and washed a few sticky arcade standups with waves of frustration, but Oblivion was the first game that felt like it had been made just for me. I was 21.

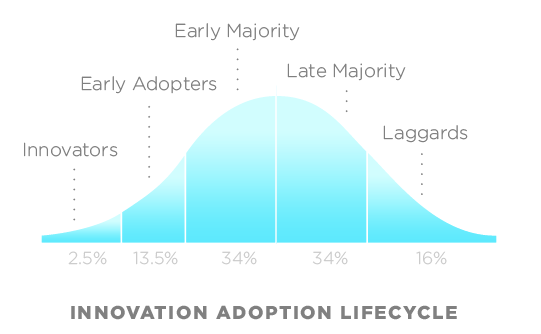

Yup. I didn’t get into games until after college. Shock! Flabbergast-ration! Accusations of being a dreaded ‘Late Adopter!’ Sure, I picked up the passion as an adult, but late isn’t lousy.

In the technology adoption lifecycle, I am what sociologists call the early majority. I usually wait until the second generation of a console or phone, just so they can get the kinks out before I jump in. No red ring of death for me, thank you very much. And good thing too, because technology, especially of the communication and entertainment varieties, was never meant to be only for early adopters. Smartphones, just like video games, spread from early ‘disease’ vectors to the population as a whole, the barest definition of a technology’s memetic success.